MIT researchers are using analytics to predict when students are likely to drop online courses.

Supercomputers Unleash Big Data's Power

Supercomputers Unleash Big Data's Power (Click image for larger view and slideshow.)

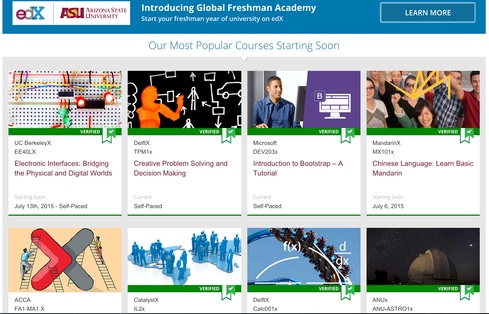

Millions of people have joined MOOCs -- massive open online courses -- but only a small fraction of these students end up earning certificates of completion. According to educational researcher Katy Jordan, the average completion rate for MOOCs is about 15%.

To help understand why online learners fail to follow through -- which matters to educators, online course designers, policymakers, students, and organizations paying for worker training -- Kalyan Veeramachaneni, a research scientist at MIT's Computer Science and Artificial Intelligence Laboratory, and Sebastien Boyer, a graduate student in MIT's Technology and Policy Program, have developed a technique that can help predict when students will drop an online learning course (an event they call "stopout").

The researchers' paper, "Transfer Learning for Predictive Models in Massive Open Online Courses," was presented last week at the International Conference on Artificial Intelligence in Education, held in Madrid.

While those offering online courses can and do employ various real-time analytic techniques to understand student behavior in a specific course, Veeramachaneni and Boyer focused on transfer learning: applying data from previous courses to refine a predictive model that can be used in any course. The research represents a way to generalize the analytic models Veeramachaneni described last year.

"Through this study, we are taking the first steps toward understanding different situations in which one can transfer models/data samples from one course to another," the paper states.

In an email, Veeramachaneni explained that the research can improve how educators intervene to encourage learners through reminders, motivational messages, and other personalized feedback. "Because predictive models we develop also give a measure of how confident we are in our prediction, it helps to prioritize such interventions," he said. "At a macro level, it can identify a pattern -- for example, perhaps after a certain video/homework quite a number of learners are disengaging with the course and are likely to stopout/drop."
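To make the idea of confidence-based prioritization concrete, here is a minimal sketch. It is not the researchers' method: the function name `prioritize`, the `budget` and `threshold` parameters, and the sample probabilities are all hypothetical, and the sketch simply assumes some model has already produced a stopout probability for each learner.

```python
from typing import Dict, List


def prioritize(predictions: Dict[str, float], budget: int,
               threshold: float = 0.5) -> List[str]:
    """Return up to `budget` learner ids, highest stopout probability first.

    `predictions` maps a (hypothetical) learner id to a predicted
    probability of stopout; learners below `threshold` are skipped.
    """
    at_risk = [(lid, p) for lid, p in predictions.items() if p >= threshold]
    # Most confident stopout predictions come first.
    at_risk.sort(key=lambda item: item[1], reverse=True)
    return [lid for lid, _ in at_risk[:budget]]


# Illustrative, made-up probabilities for four learners.
sample = {"a": 0.9, "b": 0.3, "c": 0.7, "d": 0.55}
contact_first = prioritize(sample, budget=2)
```

With a budget of two interventions, the sketch would select the two learners the model is most confident will stop out, which is one simple way to spend limited outreach where it may matter most.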

Veeramachaneni said that one of the biggest predictors of "stopout" is "pre-deadline submission time," the length of time between when a student begins work on problems and the deadline. Another valuable predictor is time spent working on weekends. Lack of weekend work on assignments may indicate that a student is busy and less likely to complete the course.
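As a rough illustration of how these two predictors might be computed from activity logs, consider the sketch below. The function names, the session format (start and end timestamps), and the sample dates are assumptions made for the example; the article does not describe how the researchers actually extract these features.

```python
from datetime import datetime, timedelta
from typing import Iterable, Tuple


def pre_deadline_hours(first_attempt: datetime, deadline: datetime) -> float:
    """Hours between a student's first attempt at the problems and the deadline."""
    return (deadline - first_attempt).total_seconds() / 3600.0


def weekend_hours(sessions: Iterable[Tuple[datetime, datetime]]) -> float:
    """Total hours of work sessions that start on a Saturday or Sunday."""
    total = timedelta()
    for start, end in sessions:
        # datetime.weekday() counts Monday as 0, so 5 and 6 are the weekend.
        if start.weekday() >= 5:
            total += end - start
    return total.total_seconds() / 3600.0


# Made-up activity for one student and one assignment.
deadline = datetime(2015, 6, 29, 23, 0)
first_attempt = datetime(2015, 6, 27, 23, 0)
sessions = [
    (datetime(2015, 6, 27, 10, 0), datetime(2015, 6, 27, 12, 0)),  # Saturday
    (datetime(2015, 6, 24, 10, 0), datetime(2015, 6, 24, 11, 0)),  # Wednesday
]
lead_time = pre_deadline_hours(first_attempt, deadline)
weekend_time = weekend_hours(sessions)
```

A small lead time or zero weekend hours would not prove anything about a single student, but values like these could feed a predictive model as input features.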

Predictive data of this sort, Veeramachaneni said, has the potential to increase feedback from learners by identifying when to send surveys so that they are more likely to receive a response. Those who drop courses become less likely to respond to surveys as time passes, he said.
