Engineers at Sapphire Now discussed the potential and limitations of SAP S/4HANA as a platform for managing an enterprise Internet of Things and the big data that strategy requires.

IoT World: Separating Smart And Dumb Things

SAP has been very clear that its future lies with its HANA in-memory technology. At the Sapphire Now 2015 conference this month, SAP execs were also very clear that they want SAP S/4HANA to be a major player in the enterprise Internet of Things. And that's where things get very complicated.

Pretty much everyone agrees that the Internet of Things (IoT) will send a tidal wave of data into the enterprise. Billions of sensors and controllers, all wanting to talk to some other machine for analysis and instruction, create trillions of transactions and data events. How can an organization reconcile built-for-speed in-memory computing with huge data quantities? In a series of conversations at Sapphire, SAP engineers and managers shared their thinking about how to do that.

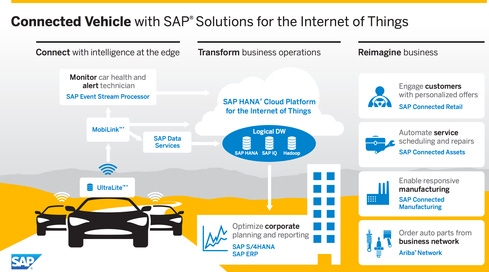

First, SAP engineers candidly admit that solving the IoT (and related Big Data) issues remains a work in progress. There are products in place -- and customers using those products -- but engineers recognize they need to offer more. The systems in place, though, start with decisions made close to the sensors and edge systems.

SAP says that many control decisions don't need to be made by systems at the enterprise core; they can be handled by concentration and control systems at the edge. SQL Anywhere is a lightweight database manager that can be placed on a single-board computer. This database will gather the data from an array of sensors and make moment-to-moment control changes to those systems.
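
To make the idea of an edge-level decision concrete, here is a minimal Python sketch of one control step that runs next to the sensor. It is not SQL Anywhere code or any SAP API; the function name `control_step`, the setpoint, and the deadband values are all assumptions chosen for illustration.

```python
def control_step(temperature_c, setpoint_c=70.0, deadband_c=2.0):
    """Decide one local control action for a single sensor reading.

    Returns (action, is_change_event). The flag marks readings that an
    edge node might report upstream as "change data". All thresholds
    here are hypothetical.
    """
    if temperature_c > setpoint_c + deadband_c:
        return "cool", True
    if temperature_c < setpoint_c - deadband_c:
        return "heat", True
    # Inside the deadband: nothing to do and nothing urgent to report.
    return "hold", False
```

The point of the sketch is that the decision needs only local data, so it never has to wait on a round trip to the enterprise core.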

Both the change data (data on error conditions or major changes) and the full sensor data are uploaded to the enterprise database using Mobilize -- a product that will queue the data and upload it in bursts when system resources and connectivity are available. At this point, the Internet of Things starts looking very much like a time-critical big data problem. If you decide to do processing in the core rather than at the edge, you face the obstacle of having to get decisions back to the edge in near real time.
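
The queue-then-burst pattern described above can be sketched in a few lines of Python. This is a generic store-and-forward illustration, not the product's actual behavior or interface; the class name `BurstUploader`, the `send_batch` callable, and the batch and queue sizes are hypothetical.

```python
from collections import deque


class BurstUploader:
    """Queue readings locally and upload them in batches when a link is up."""

    def __init__(self, send_batch, batch_size=100, max_queued=10_000):
        # send_batch is a hypothetical callable: list -> bool (True on success).
        self.send_batch = send_batch
        self.batch_size = batch_size
        # Bounded queue: once full, the oldest readings are dropped first.
        self.queue = deque(maxlen=max_queued)

    def record(self, reading):
        """Store one reading until the next upload opportunity."""
        self.queue.append(reading)

    def flush(self, link_up):
        """Upload queued readings in batches; return how many were sent."""
        sent = 0
        while link_up and self.queue:
            count = min(self.batch_size, len(self.queue))
            batch = [self.queue[i] for i in range(count)]
            if not self.send_batch(batch):
                break  # leave the batch queued and retry on a later flush
            for _ in range(count):
                self.queue.popleft()
            sent += count
        return sent
```

Readings are removed from the queue only after a batch is acknowledged, so a failed upload loses nothing. The bounded queue is one possible design choice for a small edge device; a real deployment might spill to disk instead.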

Once the sensor and controller data is uploaded, data-tiering goes into effect. In the conversations I had with engineers, they spoke of the critical importance of deciding whether data is "hot," "warm," "cold," or somewhere between those increments. (Although, to be honest, why an organization would rely on "tepid" data is beyond me.) Some data can be categorized based simply on its origin or content. Other data will be sorted based on analysis that occurs in the enterprise core. This analysis, the engineers said, can happen most rapidly in a HANA database.
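
As a rough illustration of tiering by origin or content, the following Python sketch assigns a tier to each reading. The rules are assumptions made for the example (event kinds that are always hot, and age thresholds for warm and cold); the engineers did not describe specific criteria, and none of these names come from SAP.

```python
from dataclasses import dataclass

# Hypothetical rule: these kinds of events are always treated as hot.
ALWAYS_HOT_KINDS = {"alarm", "fault"}


@dataclass
class Reading:
    source: str         # e.g. the machine or sensor that produced the data
    kind: str           # e.g. "alarm" or "telemetry"
    age_seconds: float  # time since the reading was taken


def classify(reading, warm_after=3600, cold_after=7 * 86400):
    """Return "hot", "warm", or "cold" for a reading.

    Content is checked first, then age. Both thresholds are
    illustrative defaults, not recommended values.
    """
    if reading.kind in ALWAYS_HOT_KINDS:
        return "hot"
    if reading.age_seconds >= cold_after:
        return "cold"
    if reading.age_seconds >= warm_after:
        return "warm"
    return "hot"
```

Rules like these cover only the data that can be sorted on sight. Anything that needs analysis before it can be tiered would have to go through the enterprise core first.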

A HANA database that references data sitting in other databases (which can include Hadoop, MongoDB, Oracle, or just about any other commercial database) can use SAP's Smart Data Access -- a data virtualization feature that lets the HANA control structures see data in "virtual data tables" that span multiple databases. Smart Data Access has been around since SAP HANA SP 6, and its limitations have been sorted out reasonably well. The situations in which Smart Data Access isn't sufficient center on the minute-by-minute control operations required by the Internet of Things. (Think about trying to control a vehicle or adjust a sensitive industrial chemical process from a remote site.)

SAP expects to use a concept called "streaming" to use HANA for analysis without assuming that all data is hot until proven otherwise. Streaming will, essentially, use a HANA instance as a high-speed Hogwarts Sorting Hat to determine whether the data coming in should be stored in another HANA database or sent to a cooler location for future analysis at a somewhat more leisurely pace. This step is the one that's critical for keeping big data analysis moving along at HANA speed -- and the one that can be hardest to understand.

"Streaming" in this context is depositing the incoming data in a HANA database long enough for the sorting algorithms to do their jobs. Once the system gives the data its priority level, it's sent on its merry way, clearing space for the next data bits to stream in. As with all plans of this sort, a great deal of the system's performance depends on buffering, caching, and so on, and it's within those pieces of software and service where some of the rough edges lie. Within the next few months, though, the engineers are fairly confident that they'll be demonstrating full speed and capability of all the connections and translations that need to happen.

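The hold-sort-release cycle described above can be sketched as a small bounded buffer that routes each event and then frees its slot. This Python example is a conceptual model only, not HANA streaming code; `StreamSorter`, the `is_hot` predicate, and the two in-memory lists standing in for the hot and cooler stores are hypothetical.

```python
from collections import deque


class StreamSorter:
    """Hold incoming events briefly, route each one, then free the space."""

    def __init__(self, is_hot, capacity=1000):
        self.is_hot = is_hot      # hypothetical predicate: event -> bool
        self.capacity = capacity  # maximum events held before a forced drain
        self.buffer = deque()
        self.hot_store = []       # stand-in for an in-memory database
        self.cold_store = []      # stand-in for a cooler, cheaper store

    def ingest(self, event):
        """Accept one event, draining first if the buffer is full."""
        if len(self.buffer) >= self.capacity:
            self.drain()
        self.buffer.append(event)

    def drain(self):
        """Route every buffered event to its store and empty the buffer."""
        while self.buffer:
            event = self.buffer.popleft()
            target = self.hot_store if self.is_hot(event) else self.cold_store
            target.append(event)
```

For example, `StreamSorter(lambda e: e["value"] > 90)` would send high readings to the hot store and everything else to the cold one. The buffer capacity is where the buffering and caching trade-offs mentioned above would show up in practice.
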
Virtual Tables unite data stored in different database structures.

Components in the SAP big data universe won't begin and end with HANA and Hadoop. Cloudera is one product that the SAP engineers mentioned in many discussions when they talked about mechanisms for connecting a HANA instance to Hadoop. Cloudera is a Hadoop-based data management system that includes the central pieces of Hadoop, software to let data flow between Hadoop and other data sources, and a management structure to tie everything together. Cloudera already has connectors to many of the databases and third-party analytics packages that an organization might want to build into a full big data solution. SAP BI is also likely to be a part of the system since the BI suite already includes many of the analytics bits that will be used to make sense of the data waves rolling in.

Given the SAP IoT customer base, the "things" in question are much more likely to be on an industrial shop floor than attached to a consumer's wrist. The systems controlled will probably be manipulating industrial processes rather than home air conditioning. Even so, the data volumes will be huge, and the time windows in which systems must respond to problems will be small. With HANA, SAP has taken a series of key early steps to power its customer and partner plans. If the company can polish and fit the last of the pieces it's promising, SAP S/4HANA will be a formidable presence in the IoT market.
