Data Science on a Budget: Audubon's Advanced Analytics

Over 100 years of crowdsourced data and machine learning help Audubon predict climate change's effect on where birds will live in the future. Here's how a tiny team of data scientists makes a big impact.

On Memorial Day weekend 2038, when your grandchildren visit the California coast, will they be able to spot a black bird with a long orange beak called the Black Oystercatcher? Or will that bird be long gone? Will your grandchildren only be able to see that bird in a picture in a book or on a website?

A couple of data scientists at the National Audubon Society have been examining the question of how climate change will impact where birds live in the future, and the Black Oystercatcher has been identified as a "priority" bird -- one whose range is likely to be impacted by climate change.

How did Audubon determine this? It's a classic data science problem.

First, consider birdwatching itself, which is pretty much good old-fashioned data collection. Hobbyists go out into the field, identify birds by species and gender and sometimes age, and record their observations on their bird lists or bird books, and more recently on their smartphone apps.

Audubon itself has sponsored an annual crowdsourced data collection event for more than a century -- the Audubon Christmas Bird Count -- providing the organization with an enormous dataset of bird species and their populations in geographies across the country at specific points in time. The event is 118 years old and is one of the longest-running bird data sets in the world.

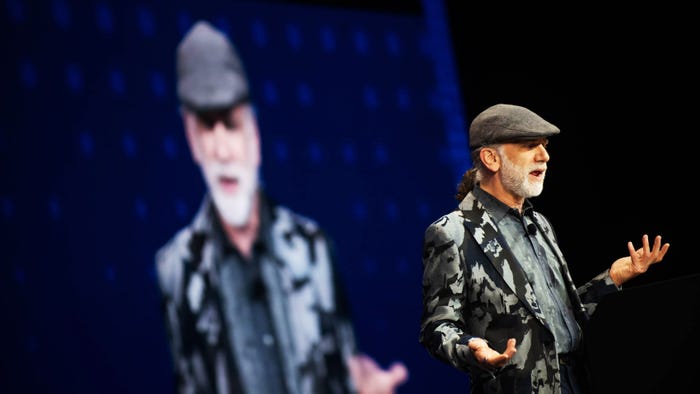

That's one of the data sets that Audubon used in its project that looks at the impact of climate change on bird species' geographical ranges, according to Chad Wilsey, director of conservation science at Audubon. He spoke with InformationWeek in an interview. Wilsey is an ecologist, and not trained as a data scientist. But like many scientists, he uses data science as part of his work. In this case, as part of a team of two ecologists, he applied statistical modeling using technologies such as R to multiple data sets to create the predictive models for future geographical ranges for specific bird species. The results are published in the 2014 report, Audubon's Birds and Climate Change. Audubon also published interactive ArcGIS maps of species and ranges to its website.

The initial report used Audubon's Christmas Bird Count data set and the North American Breeding Bird Survey from the US government. The report assessed geographic range shifts through the end of the century for 588 North American bird species during both the summer and winter seasons under a range of future climate change scenarios. Wilsey's team built models based on climatic variables such as historical monthly temperature and precipitation averages and totals. The team built the models using boosted regression trees, a machine learning technique. These models were built with bird observations and climate data from 2000 to 2009 and then evaluated with data from 1980 to 1999.
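Fitting on one decade and evaluating on earlier decades, as described above, is a temporal hold-out. The following is a minimal Python sketch of that split only, not of the models themselves. The record layout (`year`, `species`, `present`), the sample observations, and the function name `split_by_period` are hypothetical illustrations, not Audubon's actual schema or R scripts.

```python
# Year ranges taken from the article; everything else here is illustrative.
TRAIN_YEARS = range(2000, 2010)  # 2000 to 2009 inclusive
EVAL_YEARS = range(1980, 2000)   # 1980 to 1999 inclusive


def split_by_period(records):
    """Split observation records into training and evaluation sets by year.

    Records outside both periods are dropped.
    """
    train = [r for r in records if r["year"] in TRAIN_YEARS]
    evaluate = [r for r in records if r["year"] in EVAL_YEARS]
    return train, evaluate


# Made-up observations, purely for demonstration.
observations = [
    {"year": 1985, "species": "Black Oystercatcher", "present": True},
    {"year": 2004, "species": "Black Oystercatcher", "present": True},
    {"year": 2007, "species": "Black Oystercatcher", "present": False},
    {"year": 2012, "species": "Black Oystercatcher", "present": True},
]

train, evaluate = split_by_period(observations)
print(len(train), "training records;", len(evaluate), "evaluation records")
```

Holding out a separate time period, rather than a random sample, checks whether a model fitted under one set of climate conditions still describes where birds were found under another.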

"We write all our own scripts," Wilsey told me. "We work in R. It is all machine learning algorithms to build these statistical models. We were using very traditional data science models."

Audubon did all this work on an on-premises server with 16 CPUs and 128 gigabytes of RAM.

But as with any report, even one you are happy with, you can always think of ways to make it even better.

Improving the Report

So when it came time to update the report, Audubon wanted to do what many organizations do -- expand the data sets used -- to create a better, higher-resolution picture. Audubon partnered with the Cornell Lab of Ornithology to leverage its eBird database of observations from birdwatchers -- another crowdsourced database.

"It's the largest bird database in the world," Wilsey said. "It's driven by community members who are passionate about birds."

Audubon also reached out to state and local agencies and pulled in another 20 data sets from them.

Wilsey said that the updated project has as many as 40 million records of observations.

The new project also provides a much more granular resolution of geographies. In the first report, predictions were made based on distances of 10 kilometers. The new report (still in process and unpublished) makes predictions based on distances of 1 kilometer.
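To see why the finer resolution matters for computing capacity, here is a rough back-of-the-envelope sketch in Python. It assumes the "distances" refer to the side length of square grid cells, which the article does not state explicitly; the function name `refinement_factor` is hypothetical.

```python
def refinement_factor(old_cell_km: float, new_cell_km: float) -> float:
    """How many new square cells fit inside one old square cell.

    Assumes both grids are made of square cells with the given side lengths.
    """
    return (old_cell_km / new_cell_km) ** 2


# Going from 10 km cells to 1 km cells.
factor = refinement_factor(10, 1)
print(f"Each 10 km cell becomes {factor:.0f} cells at 1 km")
```

Under that assumption, the same geographic area needs about 100 times as many predictions per species, before accounting for the additional data sets.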

All the new data and greater granularity in geographic predictions created a much bigger capacity requirement for the project in terms of computing power, Wilsey told me.

"For each species we are spinning up a server that is equivalent to the server we had for the whole 2014 project," he said. Each of those might be running for 24 hours. When you are working to understand over 500 bird species, that's a lot of servers.
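As a rough illustration of the scale Wilsey describes, the sketch below totals the server time in Python. The figures of 500 species and 24 hours per run come from the quotes above and are approximations ("over 500", "might be running for 24 hours"); the count of 50 concurrent servers is a hypothetical value chosen only for the example.

```python
SPECIES = 500            # the article says "over 500", so this is a lower bound
HOURS_PER_SPECIES = 24   # "might be running for 24 hours", so an estimate


def total_server_hours(species: int, hours_each: float) -> float:
    """Total compute time if every species gets its own server run."""
    return species * hours_each


def wall_clock_days(server_hours: float, concurrent_servers: int) -> float:
    """Elapsed days if the runs are spread evenly over concurrent servers."""
    return server_hours / concurrent_servers / 24


total = total_server_hours(SPECIES, HOURS_PER_SPECIES)
one_at_a_time = wall_clock_days(total, 1)
fifty_at_once = wall_clock_days(total, 50)  # 50 is a hypothetical fleet size

print(f"Total: {total:.0f} server-hours")
print(f"One server at a time: {one_at_a_time:.0f} days")
print(f"50 servers at once: {fifty_at_once:.0f} days")
```

Run one after another, the jobs would take well over a year; run side by side, the same work finishes in days.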

Although Wilsey's data science team has doubled in size this time around -- from two members to four members -- the team is still running things pretty much on their own, without support from IT or a data engineer. The team has "outsourced" that data engineering effort to a company and service called Domino Data Lab. Essentially, Domino Data Lab enables data scientists to automate the creation of elastic infrastructure to run their models in containers, either in the cloud or on-premises. That means work can be done faster, too, because multiple models can be run at the same time. Wilsey said it has enabled the team to spin up and spin down machines for particular jobs, provide data analysis workflows, and buy just the right capacity for the job, which saves money.

"It's essentially allowed us as ecologists to tackle behind-the-scenes computer science problems," Wilsey told me. What's more, it has improved the efficiency of the data science work itself. "It's decreased the time that we are spending running the analysis," he said.

Wilsey reports that the team has seen noticeable statistical improvement in terms of insights from the data with the new setup, and that Audubon has a better idea of how each species relates to its environment. Additionally, he said, the team has learned new things about some species.

Audubon does have some new results to share soon, Wilsey said, but Audubon is looking to publish those in a peer-reviewed journal, not in InformationWeek. But stay tuned for details from this new project in the next 6 months.
