AWS Summit: New Cloud Services, Expanded EBS Choices

Amazon Web Services unveiled a security inspection service and a data transfer acceleration service, along with two new EBS options, at AWS Summit.


Amazon Web Services announced new cloud services at its AWS Summit in Chicago this week, expanding the storage options available with Elastic Block Store (EBS) and adding Amazon Inspector and Amazon S3 Transfer Acceleration to its list of offerings.

Amazon Inspector is a security assessment service that can be applied to a workload a customer plans to run on Amazon while it is still under development on the customer's premises. It has been in preview for several months and became generally available April 19.

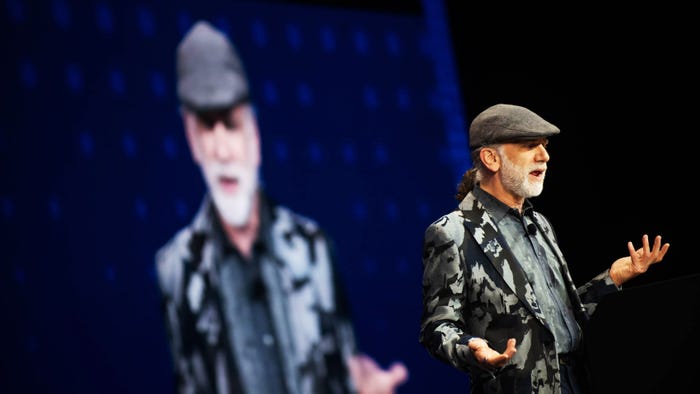

As agile and other development methods speed up application production, the effort required to determine the risk of exposures and vulnerabilities can fall behind the rapid code output, said Stephen Schmidt, chief information security officer at AWS. When applications are slated to be run on AWS, "customers have asked us to do the same rigorous security assessments on their applications that we do for our AWS services," he said in Amazon's announcement.

Inspector provides APIs for customers to connect the service to their application development and deployment process.

Connected this way, the service can be invoked programmatically when needed and can conduct assessments at the scale at which the application will run in the cloud, something that can be difficult to do on-premises, where inspection and testing resources may be scarce. With the service in place, code can proceed toward deployment without waiting for the developer or central security staff to assess it manually, Schmidt added.
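One way to picture the pipeline integration Schmidt describes is as an automated gate that reads assessment findings and decides whether a build may continue. The Python sketch below is a minimal illustration under stated assumptions: the `Finding` record, the severity ranking, and the `should_block_deployment` helper are hypothetical stand-ins, not Inspector's actual API or output format.

```python
from dataclasses import dataclass

# Hypothetical severity ranking, used only for this sketch.
SEVERITY_RANK = {"Informational": 0, "Low": 1, "Medium": 2, "High": 3}


@dataclass
class Finding:
    """A simplified, hypothetical assessment finding."""
    rule_package: str
    title: str
    severity: str


def should_block_deployment(findings, threshold="High"):
    """Return True if any finding is at or above the severity threshold."""
    limit = SEVERITY_RANK[threshold]
    # Unknown severities are treated as the lowest rank.
    return any(SEVERITY_RANK.get(f.severity, 0) >= limit for f in findings)


if __name__ == "__main__":
    findings = [
        Finding("example-package-a", "Unencrypted channel detected", "High"),
        Finding("example-package-b", "Outdated configuration", "Medium"),
    ]
    if should_block_deployment(findings):
        print("Blocking deployment: high-severity findings present")
    else:
        print("Assessment passed: continuing deployment")
```

Raising or lowering `threshold` is the design lever here: a stricter team might block on "Medium" findings, while a looser one might only log them.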


The service can also be applied to existing deployments, giving applications a recheck when they undergo changes or see increased use. Using the AWS tags that identify a workload, an operator can point the service at an application through the AWS Management Console, select tests from a list, and set how long they should run.

Inspector can look for a range of known vulnerabilities and collect information on how an application communicates with other Amazon services. It checks, for example, whether the application uses secure channels and how much network traffic passes between EC2 instances. Inspector draws on a knowledge base of secure operations organized into rule "packages," or sets of related rules, that it can apply to different situations. Amazon updates the knowledge base with the latest threat intelligence.

The assessment results, along with recommendations for what should be done to counter any vulnerabilities found, are presented to the application's owner. "Inspector delivers key learnings from our world-class security team," said Schmidt, and customers can correct issues prior to deployment instead of after an incident occurs.


The second service announced at the AWS Summit on April 19 was Amazon S3 Transfer Acceleration. It makes use of the Amazon edge network, which distributes content efficiently to end users from 50 locations. The edge network serves both AWS's CloudFront CDN and its Route 53 service, which answers DNS queries rapidly.

Edge network locations now also serve as data-transfer stations, using optimized network protocols and the edge infrastructure to move data objects from one part of the country to another. Transfer speeds improve by 50% to 500% for cross-country transfers of large objects, Jeff Barr, AWS chief evangelist, wrote in a blog post about the new service.
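To put Barr's range in concrete terms: a 50% improvement multiplies effective speed by 1.5, and a 500% improvement multiplies it by 6. The Python sketch below computes transfer time under that reading; the 100GB object and the 100Mbps baseline link are made-up illustrative figures, not AWS measurements.

```python
def transfer_hours(size_gb, rate_mbps, improvement_pct=0.0):
    """Hours to move size_gb gigabytes over a rate_mbps (megabits/s) link,
    with the effective rate raised by improvement_pct percent."""
    effective_rate = rate_mbps * (1 + improvement_pct / 100)
    seconds = size_gb * 8_000 / effective_rate  # 1GB = 8,000 megabits (decimal units)
    return seconds / 3600


if __name__ == "__main__":
    # Hypothetical 100GB object over a hypothetical 100Mbps baseline link.
    for pct in (0, 50, 500):
        print(f"{pct:>3}% faster: {transfer_hours(100, 100, pct):.2f} hours")
```

With these assumed inputs, the transfer drops from about 2.2 hours to about 1.5 hours at the low end of Barr's range and to about 0.4 hours at the high end.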

Barr said AWS has also increased the capacity of its Snowball data-transfer appliance from 50TB to 80TB. Snowball users load their data in encrypted form onto the appliance, which is physically shipped to an AWS data center. The data on a Snowball can't be decrypted until it reaches its destination, and 10 Snowballs can be uploaded to a user account in parallel.

In addition to the two new services, AWS is launching two low-cost storage options for use with EC2 instances or with big data projects on Elastic MapReduce clusters, Amazon's hosted version of Hadoop.

They are both EBS options that seek to combine the attributes of solid state drive speed with the larger storage capacity and lower cost-per-gigabyte of hard disks. AWS makes use of both types of storage in the cloud to offer Throughput Optimized HDD and Cold HDD.

Throughput Optimized HDD is designed for big data workloads that could include, in addition to Elastic MapReduce, server log processing; extract, transform, and load (ETL) tasks; and Kafka, the Apache Software Foundation's high-throughput publish-and-subscribe system. It could also be used for data warehouse workloads. It will be priced at 4.5 cents per GB per month at AWS's Northern Virginia data center, compared with 10 cents per GB per month for general-purpose EBS storage based on solid-state drives.

Cold HDD drops the price further, to 2.5 cents per GB per month. It fits the same use cases as Throughput Optimized HDD but is meant for data that is accessed less frequently, Barr wrote in another April 19 blog post.
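The price gap is easier to see on a concrete volume. The Python sketch below applies the per-GB monthly prices quoted in the article for AWS's Northern Virginia data center; it assumes cost scales linearly with provisioned gigabytes and ignores any other charges, and the 2,000GB volume size is an arbitrary example.

```python
# Launch prices quoted in the article, USD per GB per month (Northern Virginia).
PRICE_PER_GB_MONTH = {
    "Throughput Optimized HDD": 0.045,
    "Cold HDD": 0.025,
    "General Purpose SSD": 0.10,
}


def monthly_cost(volume_type, size_gb):
    """Monthly storage cost in USD, assuming cost is linear in provisioned GB."""
    return PRICE_PER_GB_MONTH[volume_type] * size_gb


if __name__ == "__main__":
    for vtype in PRICE_PER_GB_MONTH:
        print(f"{vtype}: ${monthly_cost(vtype, 2000):,.2f} per month for 2,000GB")
```

For that example volume, the sketch gives $90 for Throughput Optimized HDD, $50 for Cold HDD, and $200 for SSD-based general-purpose storage.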

Both options are rated by throughput, in MB per second. Throughput Optimized HDD provides 250MB per second of throughput per TB of provisioned storage, up to a maximum of 500MB per second, which is reached at 2TB. Likewise, Cold HDD provides 80MB per second for each TB of provisioned storage, up to a maximum of 250MB per second, which is reached at about 3.1TB.
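The scaling rule in the paragraph above amounts to "per-TB rate times size, capped at a maximum." The Python sketch below encodes only the figures stated in the article; the cap is reached at 500 / 250 = 2TB for Throughput Optimized HDD and at 250 / 80 = 3.125TB (the article's "3.1TB") for Cold HDD.

```python
# (MB/s per provisioned TB, maximum MB/s) as stated in the article.
RATES = {
    "Throughput Optimized HDD": (250, 500),
    "Cold HDD": (80, 250),
}


def max_throughput_mbps(volume_type, size_tb):
    """Throughput scales linearly with provisioned TB until it hits the cap."""
    per_tb, cap = RATES[volume_type]
    return min(per_tb * size_tb, cap)


if __name__ == "__main__":
    for vtype in RATES:
        for size in (1, 2, 4):
            print(f"{vtype}, {size}TB: {max_throughput_mbps(vtype, size)} MB/s")
```

Provisioning beyond the cap point adds capacity but, under this rule, no further throughput.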

Both types offer a baseline performance level and the ability to burst above it for short periods. Infrequent use of bursting builds up a "burst bucket," a time credit that determines how long bursting may last, just as with the standard EBS volume types, Barr said in the blog post.
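The "burst bucket" behaves like a token bucket: running below the baseline earns credit, and running above it spends credit. The Python sketch below models that idea under assumptions. The 250MB/s burst rate comes from the article's Throughput Optimized HDD figure, but the 40MB/s baseline and 1,000MB bucket size in the usage example are hypothetical numbers chosen for illustration, and real EBS credit accounting may differ.

```python
from dataclasses import dataclass


@dataclass
class BurstBucket:
    """Simplified token-bucket model of burst credits (illustrative parameters)."""
    baseline_mbps: float  # throughput the volume can sustain indefinitely
    burst_mbps: float     # highest throughput allowed while credits last
    capacity_mb: float    # maximum credit the bucket can hold
    credits_mb: float = 0.0

    def step(self, demand_mbps, seconds=1.0):
        """Serve demand for `seconds` and return the throughput delivered."""
        # Above baseline, spending may not exceed the credit in the bucket.
        allowed = min(self.burst_mbps, self.baseline_mbps + self.credits_mb / seconds)
        delivered = min(demand_mbps, allowed)
        # Unused baseline adds credit; throughput above baseline spends it.
        self.credits_mb += (self.baseline_mbps - delivered) * seconds
        self.credits_mb = max(0.0, min(self.capacity_mb, self.credits_mb))
        return delivered


if __name__ == "__main__":
    bucket = BurstBucket(baseline_mbps=40, burst_mbps=250, capacity_mb=1000)
    bucket.step(0, seconds=10)  # idle period earns credit
    for second in range(4):
        print(f"second {second}: {bucket.step(250)} MB/s delivered")
```

In the usage example, ten idle seconds earn enough credit for one full-speed second, a partly throttled second, and then a return to the baseline rate, which is the "infrequent use builds up credit" behavior Barr describes.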

"We tuned these volumes to deliver great price/performance when used for big data workloads. In order to achieve the levels of performance that are possible with the volumes, your application must perform large and sequential I/O operations, which is typical of big data workloads," Barr explained in the blog.

That's because of the nature of the underlying drives, which can "transfer contiguous data with great rapidity" but perform less well when asked to conduct many small, random-access I/Os, such as those a relational database engine requires. If these storage options are used for that purpose, Barr acknowledged, they will be "less efficient and will result in lower throughput." General-purpose, SSD-based EBS would be a much better fit, he noted.
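A rough way to see why I/O size matters: if a volume can complete only a limited number of operations per second, its throughput is roughly operations per second times the size of each operation. The Python sketch below assumes a hypothetical ceiling of 500 operations per second; the article does not give operation limits for these volume types. It also ignores disk seek time, which on spinning drives lowers the achievable operation rate further for random access.

```python
ASSUMED_IOPS = 500  # hypothetical operations-per-second ceiling, for illustration only


def throughput_mb_per_s(iops, io_size_kb):
    """Approximate throughput in MB/s as operations/s times operation size (1MB = 1,024KB)."""
    return iops * io_size_kb / 1024


if __name__ == "__main__":
    for label, size_kb in (("large sequential", 1024), ("small random", 16)):
        mbps = throughput_mb_per_s(ASSUMED_IOPS, size_kb)
        print(f"{label} ({size_kb}KB I/Os): {mbps:.1f} MB/s")
```

At the same assumed operation rate, 1,024KB operations move 500MB per second, while 16KB operations move under 8MB per second, which is the gap Barr warns about for database-style workloads.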
