Nvidia DRIVE PX 2 Boosts IQ Of Self-Driving Cars

DRIVE PX 2, the latest version of Nvidia's supercomputer for cars, enables a host of different functions that can help autonomous vehicles run safely and efficiently.

Google, Tesla, Nissan: 6 Self-Driving Vehicles Cruising Our Way


Nvidia is launching the DRIVE PX 2, a tablet-sized computing system designed for in-vehicle artificial intelligence (AI). The graphics chipmaker unveiled its updated autonomous car technology right before the official start of the 2016 CES expo in Las Vegas on Jan. 4.

The computer utilizes deep learning on Nvidia's graphics processing units (GPUs) to create 360-degree situational awareness around the car, which helps the car determine where it is and compute a safe, comfortable trajectory.

The platform is supported by the company's DriveWorks software suite, which includes tools, libraries, and modules designed to aid the development and testing of autonomous vehicles.

DriveWorks enables sensor calibration, the acquisition of situational data, data synchronization, and the processing of sensor data streams through a complex pipeline of algorithms running on all of the DRIVE PX 2's processors.
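To give a sense of what data synchronization involves, here is a minimal Python sketch that pairs camera frames with the nearest radar sample by timestamp. It is an illustration only, not the DriveWorks API: the function names, the sample timestamps, and the `max_skew` tolerance are all assumptions made for the example.

```python
from bisect import bisect_left


def nearest_sample(timestamps, t):
    """Return the index of the timestamp closest to t (list sorted ascending, non-empty)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer in time.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def synchronize(camera_ts, radar_ts, max_skew):
    """Pair each camera frame with the nearest radar sample within max_skew seconds."""
    if not radar_ts:
        return []
    pairs = []
    for cam_idx, t in enumerate(camera_ts):
        rad_idx = nearest_sample(radar_ts, t)
        if abs(radar_ts[rad_idx] - t) <= max_skew:
            pairs.append((cam_idx, rad_idx))
    return pairs


# Hypothetical timestamps in seconds: a ~30 Hz camera and a 20 Hz radar.
camera_ts = [0.000, 0.033, 0.066, 0.100]
radar_ts = [0.010, 0.060, 0.110, 0.160]
print(synchronize(camera_ts, radar_ts, max_skew=0.015))
```

With these made-up numbers, the second camera frame has no radar sample within 15 milliseconds and is left unpaired, which is the kind of gap a real pipeline would have to interpolate across or skip.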

Software modules are included for all aspects of the autonomous driving pipeline, from object detection, classification, and segmentation to map localization and path planning.

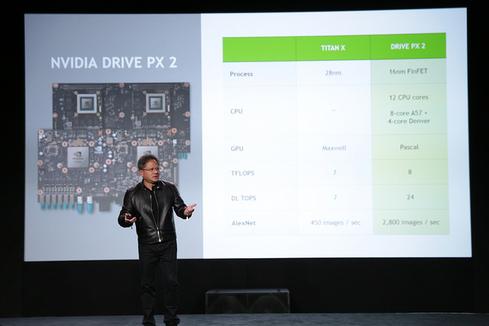

The DRIVE PX 2 sports two next-generation Tegra processors plus two next-generation discrete GPUs based on the Pascal architecture, which together deliver up to 24 trillion deep-learning operations per second.

For map localization and path planning, the system can compare real-time situational awareness with a known high-definition map, enabling it to plan a safe route and drive precisely along it, while adjusting to constantly changing circumstances.
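The idea of comparing what the car sees with a known map can be sketched in a few lines of Python. This is a deliberately simplified illustration, not Nvidia's method: it assumes the car's heading is already known, that each observed landmark has already been matched to its map entry, and that the landmark names and coordinates below are hypothetical.

```python
def localize(map_landmarks, observations):
    """Estimate the vehicle position from landmarks seen relative to the car.

    map_landmarks: {landmark_id: (x, y)} in map coordinates (metres)
    observations:  {landmark_id: (dx, dy)} offset from car to landmark,
                   expressed in map-aligned axes (heading assumed known)
    """
    # Each matched landmark yields one candidate position for the car.
    candidates = [
        (map_landmarks[k][0] - dx, map_landmarks[k][1] - dy)
        for k, (dx, dy) in observations.items()
        if k in map_landmarks
    ]
    if not candidates:
        raise ValueError("no observed landmark appears in the map")
    # Average the candidates to smooth out per-landmark measurement noise.
    n = len(candidates)
    return (sum(x for x, _ in candidates) / n,
            sum(y for _, y in candidates) / n)


# Hypothetical map entries and noisy observations.
hd_map = {"sign": (10.0, 5.0), "pole": (20.0, -3.0)}
seen = {"sign": (4.0, 3.0), "pole": (14.2, -4.8)}
print(localize(hd_map, seen))
```

Here the two landmarks disagree slightly about where the car is, and the estimate lands between them, near (5.9, 1.9). A production system would also estimate heading and weight each landmark by its measurement confidence.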

In addition, for general-purpose floating-point work, the platform's multi-precision GPU architecture is capable of up to 8 trillion operations per second. That capability lets partners run numerous autonomous driving algorithms, including sensor fusion, localization, and path planning. It also provides high-precision compute when needed for layers of deep learning networks.

Its deep learning capabilities also enable it to learn how to handle the challenges of everyday driving, such as unexpected road debris, erratic drivers, and construction zones. They also help it cope with poor weather, including rain, snow, and fog, and with difficult lighting conditions such as sunrise, sunset, and extreme darkness.

The DRIVE PX 2 can process the inputs of 12 video cameras, plus those of lidar, radar, and ultrasonic sensors, fusing them together to detect objects, identify them, determine where the car is relative to the world around it, and then calculate an optimal path for safe travel.
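One textbook way to fuse readings from different sensors is inverse-variance weighting, in which the more certain sensor counts for more. The Python sketch below shows that technique under stated assumptions; it is not a description of how the DRIVE PX 2 fuses its inputs, and the sensor readings and variances are invented for the example.

```python
def fuse_estimates(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Every variance must be positive.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    fused_variance = 1.0 / total
    return fused_value, fused_variance


# Hypothetical distance to the car ahead, in metres.
camera = (20.0, 4.0)  # camera estimate, noisier (variance 4.0)
radar = (22.0, 1.0)   # radar estimate, more precise (variance 1.0)
print(fuse_estimates([camera, radar]))
```

With these numbers the fused distance is about 21.6 metres, closer to the radar reading, and the fused variance of about 0.8 is lower than that of either sensor alone, which is the point of combining them.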

Since Nvidia delivered the first-generation DRIVE PX last summer, more than 50 automakers, suppliers, developers, and research institutions have adopted the AI platform for autonomous driving development, including Ford, Audi, and BMW.


Swedish carmaker Volvo plans to use the computing engine to power a fleet of 100 Volvo XC90 SUVs that will start to hit the road next year in the company's Drive Me autonomous-car pilot program.

The cars will operate autonomously on roads around Gothenburg, the carmaker's hometown, and semi-autonomously elsewhere.

(Image: Nvidia)

In addition, the company offers an end-to-end solution for training a deep neural network and deploying the trained network in a car. It consists of DIGITS, a tool for developing, training, and visualizing deep neural networks, and the DRIVE PX 2.

The DRIVE PX 2 development engine will be generally available in the fourth quarter of 2016. It will be available to early access development partners in the second quarter.
