A Lesson in Nightshade and Defenses Against GenAI Content Abuses

Is there a way to find truth and protect art amid the digital mirages AI produces, sometimes blatantly without consent?

Ownership and control of original content, as well as trust in the authenticity of what appears online, came into doubt after generative AI (GenAI) hit the streets.

While the technology offered a sort of democratization of content production, it also raised the ire of creatives whose original material was used to train GenAI without their consent. Furthermore, the production of images and other material via GenAI continues to escalate disbelief in the authenticity of all digital content. Moreover, it can fuel propaganda and smear campaigns.

Advocates for GenAI face a battle with creators over content control while also facing demands from the public for authenticity.

Defenders Assemble

When it comes to content control, there seem to be two macro tactics to stop AI from feeding on data: block the bots from websites or “poison” content to confuse AI.

One way of restricting scraping is the Robots Exclusion Protocol, typically a robots.txt file placed at the root of a website that tells bots and crawlers which parts of the site they can and cannot look at. This assumes the creators of the bots care about compliance with such voluntary protocols.
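As a rough illustration of how a well-behaved crawler might consult these rules, the sketch below parses a robots.txt file with Python's standard `urllib.robotparser` module. The file contents, the `ExampleAIBot` user agent, and the example.com URLs are hypothetical and used only for demonstration; nothing here forces a crawler to obey the answer it gets.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one named AI crawler everywhere,
# and keep every other bot out of /private/ only.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without making a network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# The named AI crawler is disallowed from the whole site.
ai_bot_gallery = parser.can_fetch("ExampleAIBot", "https://example.com/gallery/art.png")

# Other bots fall back to the wildcard rules.
other_bot_gallery = parser.can_fetch("SomeOtherBot", "https://example.com/gallery/art.png")
other_bot_private = parser.can_fetch("SomeOtherBot", "https://example.com/private/notes.html")

print(ai_bot_gallery, other_bot_gallery, other_bot_private)
```

The check happens entirely on the crawler's side, which is why the protocol only works when bot operators choose to honor it.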

Content distribution networks might step in with bot detection resources. For example, Cloudflare says its users can specify which types of bots they want to allow or block from crawling their websites, including crawlers for AI.

Scraping: An Old Issue with New Concerns

David Senecal, principal product architect, fraud and abuse, with Akamai Technologies, says earlier botnet scraping was, naturally, not as advanced as what is seen today, and that it evolved into more sophisticated tooling as skilled engineers were hired to develop it further. “They were mainly based on some advanced scripts that somebody would write to kind of mimic the way requests would look coming from a regular browser,” he says.

More recently, the push to provide business intelligence includes data collection frequently conducted by scraping websites, Senecal says. “They can use more advanced AI and potentially generative AI to process that data to be able to provide the intel that they are sending to their customer.”

In addition to businesses not knowing what is done with the data or what long-term impact scraping might have on their operations and strategy, he says the bots themselves could cause stability issues, skew understanding of user conversion rates, and affect the organization’s bottom line.

A software arms race may be brewing on the scraping front. Software such as Kudurru from Spawning is in development to actively block AI scrapers. Datadome offers scraping protection software to thwart large language models.

Nightshade: A Poison Countermeasure to GenAI

For those who want to deter GenAI from feeding off their original content, the Nightshade Team at the University of Chicago developed tools to stymie efforts to use artists’ works. Glaze is a defensive measure to thwart style mimicry while Nightshade is an offensive, “poisoning” measure to disrupt scraping without consent.

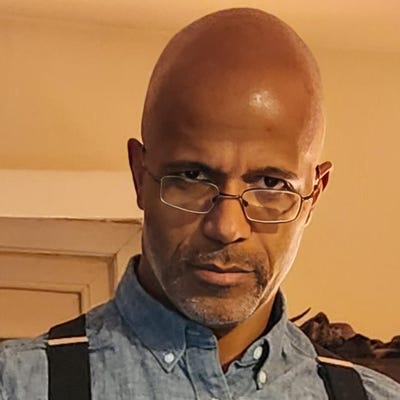

Ben Y. Zhao, Neubauer professor of computer science at the University of Chicago, a lead on the Nightshade Team, has worked in security and machine learning systems. He had been working on a rather dystopian project to see what would happen if someone trained unauthorized facial recognition models based on social media and everyone’s increasing internet footprint. “As we were preparing to finalize our research paper, The New York Times article broke about Clearview.ai and how that was already happening,” he says, citing the privacy uproar that ensued about law enforcement’s use of such technology to pursue suspected criminals.

As a result, the project Zhao was working on got a fair bit of media attention as well as interest from members of the public who wanted to download Fawkes, the tool the team had written to obfuscate selfie images and disrupt AI facial recognition models. Fawkes is a reference to the Guy Fawkes mask made famous by the “V for Vendetta” graphic novels and movie.

Not long after Fawkes got on the radar, artists reached out in December 2022 to see if it could be used to protect art. “At the time we were completely clueless about this entire thing that was happening with image generation, and so we were very confused,” Zhao says.

That all changed when Midjourney and other GenAI image programs made headlines and got the attention of the masses.

The team got back in touch with the artists who had reached out, and then they were invited to join an online town hall hosted by the Concept Art Association. “It was really eye opening,” Zhao says. “It was more than 500, I believe, professional artists on a single Zoom call, just openly talking about how generative AI had turned their lives upside down, disrupting their work, stealing their artistic styles, and basically wearing them like skins without their permission.”

During the town hall, he asked the artists if they would be interested in a technical tool to protect their works. One initial response rejected the idea, wanting to see laws, regulations, and solid, longer-term solutions to address the problem. “I think that that came from Greg Rutkowski, who had been one of the most well-known artists who was really targeted in this way,” Zhao says, referring to how frequently Rutkowski’s name is used in GenAI prompts to produce images that draw on his original works.

Other artists on the call, Zhao says, such as Karla Ortiz, were more receptive to exploring a technical tool to protect their art. “After that call, I got in touch with Karla and with her tried to understand what kind of tools would be meaningful,” Zhao says. “We designed what became eventually Glaze.”

That led to tests, user studies, and putting the tool in front of some 1,200 professional artists to see what alterations to their work they would tolerate to deploy Glaze’s protections. Glaze focuses on protecting individual artists’ works without affecting GenAI base models, Zhao says.

The team made Glaze available for download in March 2023, and by summer 2023 it had reached one million downloads. A web service version soon followed, and the team got to work on the successor tool -- Nightshade.

“Artists needed something to push back against unethical, unlicensed, scraping of content for training,” Zhao says. Nightshade is a tiny little poison pill that can be put into art so that cumulative training on that type of sample spoils GenAI. “Once it adds up to a certain point, it starts to really disrupt how AI models get trained, how they learn about the association between concepts and visual cues and features and models start to break down.”

The first version of Nightshade became available in January and saw some 250,000 downloads within the first five days, he says.

Where Glaze is a smaller-scale defense mechanism for individuals, Nightshade is aimed at the growing use of AI model trainers that might grab art from any source. “That’s something that, up until now, there was not a single solution for,” Zhao says. “If you are a content owner -- doesn’t matter whether you’re an artist, or a gaming company, or a movie studio -- you have zero protection whatsoever against someone who is going to take your content and shoot it into the pipeline. So, it doesn’t matter if you’re Disney or a gaming company or a single, part-time, hobby artist. It’s all the same.”

Opt-out lists exist to ostensibly keep content away from GenAI, but they are optional and might still be disregarded, he says. “It’s completely unrealistic.” For instance, Zhao says a GenAI company might request all the information about an image that should not be crawled, and the artist would simply have to hope they do not use the image anyway.

“There’s absolutely nothing enforcing that at all,” he says. “They can literally turn around, download your image, put it into their training pipeline, and you can’t prove a damn thing.”

Nightshade, he says, is designed to give copyright some teeth. If AI ingests enough digital poison, it might misunderstand what a cat looks like, and instead generate chimeric images with cow hooves and believe it created a feline. “Hopefully at some point they will actually think about licensing content for training and actually paying people for their work rather than just taking it and for all intents and purposes stealing it for commercial purposes,” Zhao says.

There is a vocal population of GenAI fans and users, including those who see themselves as artists by using prompts to produce images -- images that rely on training from original works. While proponents of GenAI contend that Nightshade can eventually be defeated, Zhao welcomes the development of more “poisons” by others to make it collectively more worthwhile to work with artists.

“At some point the cost of detecting and trying to remove these kinds of poisons will continue to grow and it will get so high that it will actually be cheaper to license content,” he says. “The whole point here is not to say we’re trying to just break these models. That’s not the end goal. The end goal is to make it so expensive to train on uncurated content that’s unlicensed that people will actually go the other way -- that people will just pay for licensed content. I think that’s good for everyone.”

Seeking A Measure of Truth Among Digital Mirages

It may be impossible to banish GenAI images completely to the void, but additional measures can be taken to identify visual content produced through such means. For example, Adobe Content Credentials is an information layer for digital images. Adobe refers to Content Credentials as a sort of “nutrition label” to indicate the creation and modification of images published online.

Adobe also co-founded the Content Authenticity Initiative and says it has been working with the Coalition for Content Provenance and Authenticity to help halt misinformation that stems from altered digital images. According to Adobe, Microsoft introduced Content Credentials to all AI-generated images produced through the Bing Image Creator. That will add such details as time and date of image creation. There are also plans, according to Adobe, for Microsoft to integrate Content Credentials into Microsoft Designer, an AI-powered image design app.

Escalating concerns about authenticity and bot access to content, at least, have reached the ears of some GenAI developers. OpenAI is introducing digital watermarks to images generated through its resources to indicate their creation through AI as part of the push to revive trust in digital content. Last year, OpenAI gave websites a way to opt out of letting GPTBot crawl sites, offering a way to prevent content, data, and code from being scraped to train AI.
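For site owners, the GPTBot opt-out takes the form of a robots.txt rule that names the crawler. The sketch below is a minimal illustration, not an official tool: the helper `opt_out_rules` and the example.com URL are hypothetical, and `GPTBot` is the only crawler name taken from the text above. It builds the rule text and then checks it with Python's standard `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser


def opt_out_rules(agents, disallow="/"):
    """Return robots.txt text that disallows each named crawler.

    Hypothetical helper for illustration; one stanza per user agent.
    """
    stanzas = [f"User-agent: {agent}\nDisallow: {disallow}" for agent in agents]
    return "\n\n".join(stanzas) + "\n"


# Build the opt-out rule for the crawler named in the article.
rules = opt_out_rules(["GPTBot"])
print(rules)

# Sanity check: the named crawler is blocked, ordinary visitors are not.
checker = RobotFileParser()
checker.parse(rules.splitlines())
gptbot_allowed = checker.can_fetch("GPTBot", "https://example.com/portfolio/")
browser_allowed = checker.can_fetch("Mozilla/5.0", "https://example.com/portfolio/")
print(gptbot_allowed, browser_allowed)
```

As with any robots.txt directive, this only expresses a request; it takes effect to the extent that the crawler's operator honors it.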

Still, with GenAI built on the ability to ingest data and code to produce content via prompts, the tug-of-war for access to and control of original content may be pivotal to the technology’s future.
