Tape As A Long-Term Storage Option

Datacenters are being asked to archive data for longer periods of time than ever. Here's why tape is an ideal solution for long-term data storage.

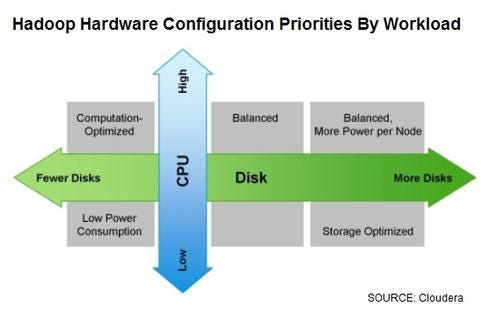

10 Hadoop Hardware Leaders

10 Hadoop Hardware Leaders (Click image for larger view and slideshow.)

When I speak at seminars on data protection, the subject of retention always comes up. Datacenters of all sizes are increasingly required to retain data for extended periods. The concerns about storing data for 15 years or more usually center on two things: cost-effectiveness, and confidence that the data will still be readable 15 years from now.

Does reliable retention kill tape?

During these discussions, many people assume that tape is not an option for long-term data storage. My response? To quote the infamous Yosemite Sam, "Not so fast, rabbit!" Tape technology can meet a 15-year retention requirement. Here's why:

First, tape's extreme cost advantage over disk (even with all the data-efficiency technologies) allows you to make copies of data on two or three different pieces of media. That way, if one -- or even two -- pieces of media fail, the data is still recoverable from a surviving copy, and the cost of keeping those extra copies is nominal.
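
To put rough numbers on the value of extra copies, here is a minimal sketch. It assumes each tape fails independently of the others, and the 5 percent per-tape failure rate is a made-up figure for illustration, not a measured one. The function name is hypothetical as well.

```python
def probability_all_copies_fail(per_tape_failure: float, copies: int) -> float:
    """Chance that every copy is unreadable, assuming independent failures."""
    if not 0.0 <= per_tape_failure <= 1.0:
        raise ValueError("per_tape_failure must be between 0 and 1")
    if copies < 1:
        raise ValueError("copies must be at least 1")
    # Independent events multiply, so each added copy scales the risk
    # of total loss by the per-tape failure probability.
    return per_tape_failure ** copies


if __name__ == "__main__":
    assumed_failure_rate = 0.05  # illustrative assumption, not real data
    for copies in (1, 2, 3):
        risk = probability_all_copies_fail(assumed_failure_rate, copies)
        print(f"{copies} copies: chance of losing all of them = {risk:.6f}")
```

In practice failures are not fully independent (copies stored in the same room share the same risks), which is one reason to keep copies in different locations.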

Second, some tape libraries and many archiving solutions have the ability to periodically scan tape media to make sure they are readable and the data on them is valid. If an error or data corruption is detected, the entire tape or the corrupted data can be copied to a new tape.
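
The idea behind this kind of periodic scan can be sketched at the file level. Tape libraries and archive products do their checking in their own ways, often in firmware, so treat the following only as an analogy: it assumes you recorded a SHA-256 checksum for each archived file when it was written, and the function names and the manifest layout are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_damaged(manifest: dict[str, str]) -> list[str]:
    """Return the paths whose current checksum no longer matches the manifest.

    `manifest` maps a file path to the checksum recorded at archive time.
    A missing file is reported as damaged too.
    """
    damaged = []
    for name, expected in manifest.items():
        path = Path(name)
        if not path.is_file() or sha256_of(path) != expected:
            damaged.append(name)
    return damaged
```

Anything `find_damaged` reports would then be restored from one of the other copies and rewritten to fresh media.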

Third, migration to new tape technology can be similarly automated. Archive programs can move one or more copies to new media -- for example, to a fresh tape or next-generation media -- to either refresh the tapes or take advantage of greater tape capacities. And tape has a proven track record of new drives being backward-compatible with prior generations.

What about disk archive?

For these reasons, tape is an ideal storage target for data that requires long-term retention. On the other hand, don't go to the other extreme and start throwing disk out -- disk certainly has a role. Beware of any vendor that claims you need only one or the other. A scale-out disk archive that can support multiple node types (so that nodes can be replaced as drives fail or hardware changes over time) is ideal for mid-range data retention -- say, a few months to a few years. This data, or a portion of it, is the most likely to require quick recovery.

Datacenters are being asked to retain data for longer than ever before, and a well-designed archive is becoming an essential part of any data retention strategy. An effective strategy should let you retain data for a long time with full confidence that it will be readable when needed.
