JPMorgan Chase’s Sandhya Sridharan Talks Empowering Engineers

One of the biggest banks in the world, in terms of assets, maintains a DevSecOps mindset, explores AI, and cuts out the noise to encourage its engineers to explore.

At a Glance

- JPMorgan Chase has more than 35,000 software engineers developing its solutions.

- Sridharan and her team built a developer platform to increase developer productivity and accelerate public cloud migration.

- JPMorgan Chase is taking a careful approach to implementing its AI strategy, including GenAI.

As far as multinational financial services firms go, JPMorgan Chase has a significant footprint when it comes to its engineers and the operations they serve. JPMorgan Chase has more than 35,000 engineers building solutions for a firm with $3.9 trillion in assets and a customer base that includes millions of households and small businesses.

The combination of regulatory scrutiny and customer demand means the firm’s engineers need access to resources to help them operate quickly and efficiently while helping the firm meet compliance expectations.

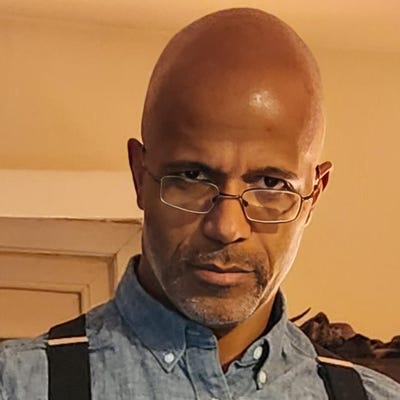

Sandhya Sridharan, global head of engineer’s platform and experience at JPMorgan Chase, and her team built an integrated developer platform to help engineers deploy solutions effectively, increase developer productivity, accelerate public cloud migration, and enable modern engineering practices. She spoke with InformationWeek about the challenges and considerations that come into play when assisting engineers within a massive financial organization.

You’ve adopted a DevOps mindset, and your development is at significant scale -- before we dive into fine details, where do you start to plan to operate at such a level? Where do you look first to empower engineers to then service your customers?

Empowering the engineers to ensure that they have that full end-to-end experience -- how do we do that? In multiple ways. First, what are some of the best industry practices, solutions, tooling? The second is actually listening, having those customer advisory boards, senior practitioners, or product cabinets to understand not just their business priorities, but also understand where their friction points are, what are they trying to do, what their ecosystem looks like, and so on.

When I was actually a developer, environments were so simple. You just write code; you check it in, a build system kicks off, and then there is a separate team that goes and packages your software and then ships it to customers. If you look now, there’s a cognitive overload in terms of expecting engineers to know multiple technologies, infrastructure, networking, and so on. How do we abstract all of that complexity is where we start. How can we build a more self-service model? The more engineers that I speak to, other than -- “I want a reliable system; I want this thing that goes fast; I want to abstract complexity of controls,” -- apart from that, all of their requirements are slightly different. What one considers modern, in terms of language support or infrastructure support, is different for another engineer. Having the capability to do self-service, that flexibility is critical.

Laying down that foundational layer for a platform is very important. Definitely it is industry best practices, best of breed tooling, what are the engineers trying to solve for, what are the business priorities and then how can we build a platform that’s not just reliable, scalable, secure, but also flexible enough for engineers to have self-service capabilities. That’s how I go about building out the foundational layer to ensure that anything that we build upon that stays strong.

How do you introduce and maintain DevOps in a meaningful way for engineers at such scale?

DevOps is more of a mindset or a culture. There is definitely a platform and a tooling aspect of that that enables that culture and mindset. First and foremost is, right from the planning and ideation phase, what are some of the best practices, principles that they need when they’re creating their epics. We call it the planning and ideation phase, leading to when they’re writing and committing code, what capabilities they have, and also making sure security is built in. More than DevOps -- DevSecOps.

Ensuring that the teams have the training, the enablement, and the awareness, but also from a tooling perspective provide those guardrails, provide that safe environment for them to progress from, like a developer in the inner loop where they develop their software onto a dev or a staging system. And before they go to production, every step of the way, the platform kind of prods them, saying, “Hey, you committed a new piece of code. I’m going to kick off security scanning,” for example. “I’m going to kick off code coverage so in the process I’m going to run all your unit tests, integration tests, and so on.”
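The interview does not say how the platform triggers these steps. Purely as an illustration, a commit-triggered set of checks might be sketched as follows; the function name, the check names, and the stubbed results are all assumptions, not JPMorgan Chase's implementation.

```python
def run_commit_checks(commit_id, checks):
    """Run every registered check against a commit and record pass/fail."""
    results = {}
    for name, check in checks.items():
        results[name] = check(commit_id)
    return results


def failed_checks(results):
    """List the names of checks that did not pass, in registration order."""
    return [name for name, ok in results.items() if not ok]


# Placeholder checks (hypothetical). A real platform would call scanners
# and test runners here instead of returning fixed values.
EXAMPLE_CHECKS = {
    "security_scan": lambda commit_id: True,
    "unit_tests": lambda commit_id: True,
    "integration_tests": lambda commit_id: True,
    "code_coverage": lambda commit_id: False,  # pretend coverage is too low
}

results = run_commit_checks("abc123", EXAMPLE_CHECKS)
print(failed_checks(results))
```

The point of the sketch is only that the developer commits code and the platform, not the developer, decides which checks run.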

With DevSecOps, sometimes there’s the push and pull of security wanting to slow things down. Are there any particular tricks for that? How do you go about finding that equilibrium, so all the different parts are operating to their potential but also not slowing things down? How do you find that kind of balance?

There’s always a very interesting tug of war. When you have such a wide scale and variance in the systems -- there are some teams who want a lot more freedom just until they go to production. There are teams who want to do checks well before it makes it into a pull request, so kind of finding the balance. We have a policy service. There’s a firmwide policy that absolutely everyone needs to abide by. For example, no V1, V2 vulnerabilities in production. At what point we block them depends on the policy that the line of business can build on top of the platform’s policy.

For example, one line of business or one set of applications will go -- before you even get into a dev or a staging environment -- “I want all the scanning to be complete, code coverage to be done.” They can go and just add a flag to our policy service that says, “cannot do even a single deployment until everything is passed,” whereas other teams can say, “I’m OK with going up to UAT,” but the platform will not allow them to make any changes to that when they go to production. You have to abide by certain standards before they go to production.

You have to meet all of the control objectives for SDLC that we have agreed upon in terms of regulatory requirements. You have to meet certain peer reviews, code coverage criteria before production.
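Sridharan does not detail how the policy service works internally. A minimal sketch of the layering she describes, a firmwide baseline that a line of business can tighten but not loosen, might look like this. The stage names, check names, and functions below are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical environment order, earliest to latest.
STAGES = ["dev", "staging", "uat", "production"]


@dataclass
class Policy:
    # Maps each check to the earliest stage at which it must have passed.
    required_from: dict = field(default_factory=dict)


# Assumed firmwide baseline: every check must pass before production.
FIRMWIDE = Policy({
    "security_scan": "production",
    "code_coverage": "production",
    "peer_review": "production",
})


def effective_policy(line_of_business):
    """Merge a line-of-business policy onto the firmwide baseline.

    A team may require a check earlier than the baseline, never later.
    """
    merged = dict(FIRMWIDE.required_from)
    for check, stage in line_of_business.required_from.items():
        baseline = merged.get(check, stage)
        merged[check] = min(stage, baseline, key=STAGES.index)
    return Policy(merged)


def can_deploy(policy, target, passed):
    """Allow a deployment only if every check required by `target` passed."""
    target_idx = STAGES.index(target)
    return all(
        check in passed
        for check, stage in policy.required_from.items()
        if STAGES.index(stage) <= target_idx
    )


# One team blocks every deployment until scanning and coverage are done.
strict = effective_policy(Policy({"security_scan": "dev", "code_coverage": "dev"}))
# Another team adds nothing, so only the production gate applies.
relaxed = effective_policy(Policy())

print(can_deploy(strict, "dev", set()))
print(can_deploy(relaxed, "uat", set()))
print(can_deploy(relaxed, "production", {"security_scan"}))
```

In this sketch the strict team cannot deploy anywhere until its checks pass, while the relaxed team can go up to UAT but is still stopped at production until every baseline check has passed.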

Are there additional considerations that come into play when you’re looking at the factors of scale and complexity? For the size of this operation, are there other considerations that come into play?

The variance in the ecosystem. Name a language -- we have Java, Golang, C++, C#, all of that. We deploy to public cloud, private cloud, VSI, PSI, and also to some large heritage systems as well. We are a highly regulated industry, and JPMC [JPMorgan Chase] is one of the most globally, systemically important banks, so we take that very seriously. For us, that’s a big obligation. We try to automate because manual process is very, very error prone.

Going back to my earlier comment about how we make developers excited about the platform: if they use the platform, then all of this they get for free. A lot of the regulatory things have been abstracted; the complexity is abstracted from them. Just by using the platform, they get all the guardrails, the safe environment. We know that the code that goes to production has met all of the regulatory [needs]. So definitely more than the scale and the complexity -- the endpoint providers that we deploy to, the multitude of that, the regulatory environment is a big factor.

How do you gauge productivity or success? What are the ways you support engineers in terms of deployment and delivery?

One is definitely around best practices, training, and we have developer forums. We have what we call the epics my line of business, engineers’ platform and integrated experience. We have the concept of a tech bar, so many ways that we enable them. The best success for us is in making sure that the platform is intuitive enough that they go to the secondary sources to fine tune what they’re doing. Right from the platform, right from your planning and creation phase you build, integrate testing solutions to deploy and operate, including the full gamut of end-to-end observability, all of the observability solutions, all of the testing solutions that are available, and code quality tools like Sonar in the mix, and all of the best-of-breed security scanning tools.

All of that is built into the platform and a lot of the best practices are also built into the platform. For example, if the build normally takes maybe about a few minutes, then proactively the platform will highlight to them these are possible ways you can optimize your build. Same thing around deployment if they’re deploying and then as part of our evidence, if they’ve had some escaped defects or incidents, how do we catch that and highlight that before they go to production? These are the ways, the tooling in terms of capabilities that we offer from the platform, as well as some kind of best anomaly detection when things are going wrong so they can stop and relook at why, all of a sudden, this particular build took a lot longer than the previous build. Giving them more analytical insights so they can make their decisions earlier, much earlier before they find it in production.
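The interview does not say which anomaly detection technique the platform uses. One simple way to flag a build that "took a lot longer than the previous build" is a z-score against recent build durations; the function name, the threshold, and the sample durations below are assumptions for illustration.

```python
from statistics import mean, stdev


def is_slow_build(history, latest, threshold=3.0):
    """Flag `latest` if it is more than `threshold` sample standard
    deviations above the mean of `history` (durations in seconds)."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past builds took the same time; any longer build stands out.
        return latest > mu
    return (latest - mu) / sigma > threshold


# Hypothetical durations of recent builds, roughly three minutes each.
recent = [180, 175, 190, 185, 178]

print(is_slow_build(recent, 188))  # within the normal range
print(is_slow_build(recent, 420))  # far slower than usual
```

A production system would likely account for trends and build size as well; the sketch only shows the idea of comparing a new build against its own history.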

How does this all factor into the broader IT strategy at JPMorgan Chase, including for such things as cloud migration and modernization?

Very integral part of that. Whether it is accelerating development, the overall modernization transformation, protecting the firm. We have various initiatives in the firm, and I think the developer tooling on the platform is critical because it enables a lot of those functions. I gave several examples around protecting the firm. When we consume open-source libraries, are we consuming open source that is fully scanned and vetted for internal use? Do we have a full life cycle management around that?

What we build internally, as well as third party software that we consume, all of that goes through that lens of threat modeling and vulnerability detection and lifecycle management of that. And then the same thing with modernization. We are critical in terms of deploying to public cloud. We enable that automatic, continuous deployment to a public cloud, and then, if you’re a microservice as well, how do you track all your dependencies and do parallel deployments to production? Many applications have a multi-strategy approach to modernization: rehosting is one strategy, because there are benefits to it; there is replatforming -- you can keep your application code, but maybe you’re changing just your database and taking advantage of a more modern database; and then rewrite.

If you look at all three pillars of this modernization, of course there’s a fourth and a fifth element to it, but if I look at the three core things, the tool chain is very integral to deploying. Whether you’re deploying to a new data center or a public cloud, and then provisioning your infrastructure, for example, because it all goes through the platform, and then providing that full evidence, the full traceability from when the code was written or ingested, all the way to when it got deployed. So, it’s a very integral part of the modernization journey.

What kinds of external factors do you pay attention to? How do you filter out the noise with new technology emerging, including AI?

That’s a hard thing, especially at the scale at which we operate because there’s plenty of attractive tools and the vendors do a great job marketing and selling, especially to JPMC. I think that first and foremost is keeping track of our strategy and making sure we have that laser focus on our strategy, vision, and then how do we create that frictionless environment for developers and how is this particular tooling or solution critical? I think it’s very important to keep that in sight.

Of course, being highly regulated, how secure is that solution and capabilities? There’s always plenty of tools, there’s pros and cons across the industry and especially -- you refer to AI -- it’s been a pretty well-known fact that AI, especially now more GenAI and LLMs, can help accelerate engineers, whether it’s coding assistance or everything else. Lori Beer, our global CIO, shared on our investor day that we are exploring what’s out there. We are exploring other opportunities, but we have to be responsible when we implement any AI strategy including what we want to do with GenAI across various applications.

It’s a very exciting space and our engineers are super excited about the potential, and I think they know more is to come in this area. Then we have this across, whether it’s security of these ML models or whether it’s open-source security, and then the whole artifact management space, even in the database space with the vector databases -- there’s a lot of evolution happening. We like to innovate, so we want to look at what’s new out there so that innovation never stops. Constantly innovating, constantly learning, but what gets used in terms of production, where does the production code get used?

Are we keeping the firm safe by using that? Is it going to allow for the multiplier factor in terms of experience? That is some of the thinking that goes in before we actually take that new piece of technology and put it in for production use.
