Eavesdropping On A New Level

Researchers from MIT, Microsoft, and Adobe demonstrate how to capture sound using video images of objects. Yes, plants will parrot what you say with more fidelity than parrots, under the right conditions.

If a tree falls in a forest and no one is around to hear it, does it make a sound? This trite question masquerades as a conundrum, when it's really just vexing because of its vague construction and internal contradiction.

Nonetheless, this question can now be answered if there's high-resolution video footage of the toppling tree, even without an audio track.

Researchers from MIT, Microsoft, and Adobe have shown that they can recover sound from video imagery, a technique that promises to pique the interest of intelligence agencies and forensic investigators. While the technique will need to be refined to be practical outside the laboratory, it has the potential to enable retroactive eavesdropping at events that were videoed with sufficient fidelity.

Sound, of course, is how we describe vibrations we receive and perceive, typically through the air. When a sound strikes our eardrums, our eardrums move and we hear the sound (assuming the absence of impairment). And when sound waves strike an object, like a bag of potato chips, it too moves, imperceptibly.

But these vibrations can be perceived with a video camera, as Abe Davis (MIT), Michael Rubinstein (MIT/Microsoft), Neal Wadhwa (MIT), Gautham Mysore (Adobe), Frédo Durand (MIT), and William T. Freeman (MIT) have demonstrated and documented.

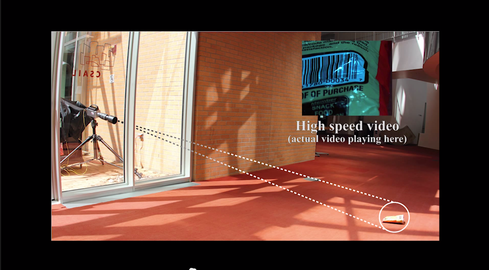

In a paper to be presented in mid-August at SIGGRAPH 2014, the researchers describe how they filmed a series of objects using both a high-speed video camera and a consumer video camera. Using only video information -- the objects' minute vibrations in response to the impact of sound waves -- they were able to reproduce sounds that had been playing near the objects.

The technique "allows us to turn everyday objects -- a glass of water, a potted plant, a box of tissues, or a bag of chips -- into visual microphones," the paper explains. "Remarkably, it is possible to recover comprehensible speech and music in a room from just a video of a bag of chips."

The science is similar to that employed by laser microphones, which use light to measure sound vibrations. But laser microphones are an active form of surveillance: a beam has to be trained on the target while the sound is being made. The visual microphone is passive, and the analysis can be done after the fact, given video of sufficient quality and source audio of sufficient volume.

The experiment focused on high-speed video -- up to 6,000 frames per second -- but the researchers also had success retrieving audio from video captured on consumer-grade video cameras shooting at 60 frames per second.
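Why the frame rate matters comes down to simple arithmetic. If each video frame is treated as one evenly spaced audio sample (an assumption made here for illustration, not a description of the researchers' software), the highest pitch that can be captured without distortion is half the frame rate, the Nyquist limit familiar from digital audio. A minimal Python sketch, with a made-up function name:

```python
def nyquist_limit_hz(frames_per_second: float) -> float:
    """Highest frequency that evenly spaced samples can represent without aliasing."""
    return frames_per_second / 2.0


# Frame rates mentioned in the article.
for fps in (60, 6000):
    print(f"{fps} fps -> frequencies up to {nyquist_limit_hz(fps):.0f} Hz")
```

By that yardstick, 6,000 frames per second covers much of the range that makes speech intelligible, while 60 frames per second on its own reaches only the very lowest bass.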

US intelligence presumably already has more sophisticated eavesdropping technology. A decade-old patent application arising from work at NASA, "Technique and device for through-the-wall audio surveillance," describes a way to listen in on even soundproofed locations by using "reflected electromagnetic signals to detect audible sound." But MIT's Visual Microphone technique could become a useful addition to an already formidable set of surveillance tools.

Take a look at how it works.

A recent Nature documentary, "What Plants Talk About," explored plant communication. But plants will parrot what you say with more fidelity than parrots, if filmed under the right circumstances while exposed to sounds equivalent to an actor speaking on a stage or louder.

The effect of sound vibrations is subtle. Plant leaves exposed to a recording of "Mary Had a Little Lamb" were moved by less than one-hundredth of a pixel. That's why you need a good video camera to turn objects into microphones.
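A shift that small never moves an edge from one pixel to the next, but it does change the brightness of every pixel very slightly, and those changes can be pooled. The Python sketch below illustrates the idea with a simple gradient-based least-squares estimate on a one-dimensional synthetic texture. It is a stand-in chosen for brevity, not the method described in the paper, and the function names, the sine-wave texture, and the 0.005-pixel shift are all invented for the example.

```python
import math


def estimate_shift(before, after):
    """Least-squares estimate of a small 1-D shift, in pixels, between two rows."""
    n = len(before)
    num = 0.0
    den = 0.0
    for i in range(n):
        # Central-difference brightness gradient, wrapping around at the edges.
        grad = (before[(i + 1) % n] - before[(i - 1) % n]) / 2.0
        change = after[i] - before[i]
        num += grad * change
        den += grad * grad
    # Moving a pattern right by d changes brightness by roughly -d * gradient.
    return -num / den


def texture(shift, n=64, period=16.0):
    """Hypothetical row of pixels: a sine pattern displaced by `shift` pixels."""
    return [math.sin(2 * math.pi * (x - shift) / period) for x in range(n)]


TRUE_SHIFT = 0.005  # half of one-hundredth of a pixel
estimated = estimate_shift(texture(0.0), texture(TRUE_SHIFT))
print(f"true shift: {TRUE_SHIFT} px, estimated: {estimated:.4f} px")
```

The example is noise-free, so it flatters the approach. In real footage, sensor noise competes with these tiny brightness changes, which is one reason image quality matters so much.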

The researchers filmed their videos using a Phantom V10 video camera, capable of filming at more than 150,000 frames per second at 96 x 8 resolution, or 480 frames per second at full 2,400 x 1,800 resolution. The major limitation appears to be image resolution: The experiment used a 400 mm lens to recover sound from 3 to 4 meters away. Capturing enough visual information to get sound from more distant objects should require even more powerful optics. Perhaps we will start seeing surveillance agencies turning telescopes toward Earth.

Objects respond to sound in different ways. A bag of Utz crab chips was moved the most by sound. A teapot, on the other hand, didn't respond very well.

A high-speed video of a pair of earbuds provided enough information for the Shazam app to accurately identify the song playing through them.

Video artifacts caused by rolling shutters of CMOS sensors in consumer video cameras allowed the researchers to recover sounds at frequencies several times higher than the frame rate of the video. To hear the actual sounds recovered, visit the research project webpage.
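The rolling-shutter trick can be pictured with some back-of-the-envelope arithmetic. A rolling shutter records each row of a frame at a slightly different moment, so each row can be read as its own sample in time. The Python sketch below assumes, purely for illustration, that 100 rows per frame are usable and that they are evenly spaced in time with no idle gap between frames. Those numbers and names are hypothetical, and the code is not the researchers' implementation.

```python
def aliased_frequency_hz(tone_hz, sample_rate_hz):
    """Apparent frequency of a tone after sampling at the given rate."""
    remainder = tone_hz % sample_rate_hz
    return min(remainder, sample_rate_hz - remainder)


FRAME_RATE = 60   # frames per second, as in the consumer-camera test
ROWS_USED = 100   # hypothetical number of usable rows per frame
TONE_HZ = 400     # hypothetical test tone

# Treat every row as one sample, ignoring any idle time between frames.
row_rate = FRAME_RATE * ROWS_USED

print("one sample per frame:", aliased_frequency_hz(TONE_HZ, FRAME_RATE), "Hz")
print("one sample per row:  ", aliased_frequency_hz(TONE_HZ, row_rate), "Hz")
```

Sampled once per frame, the 400 Hz tone is indistinguishable from a much lower one. Sampled once per row under these assumptions, it keeps its true pitch.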

