Hadoop At 10: Doug Cutting On Making Big Data Work

Doug Cutting, co-creator of Hadoop, takes time out with InformationWeek to talk about the history of the Big Data project and share his views on what's yet to come.

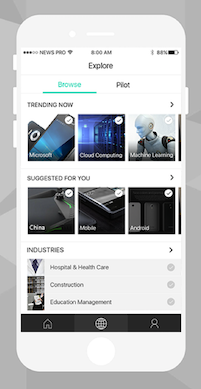

10 Cool Microsoft Garage Projects You Didn't Know About

10 Cool Microsoft Garage Projects You Didn't Know About (Click image for larger view and slideshow.)

Doug Cutting, chief architect at Cloudera, and Mike Olson, the company's chief strategy officer and cofounder, were having dinner with their families at a restaurant on Jan. 28, when Cutting blew out a candle and shared some champagne in honor of Hadoop's 10th anniversary.

Cutting developed Hadoop with Mike Cafarella as the two worked on an open source Web crawler called Nutch, a project they started together in October 2002. In January 2006, Cutting started a sub-project by carving Hadoop code from Nutch. A few months later, in March 2006, Yahoo created its first Hadoop research cluster.

In the decade since, Hadoop has evolved into an open source ecosystem for handling and analyzing Big Data. The first Apache release of Hadoop came in September 2007, and Hadoop soon became a top-level Apache project. Cloudera, the first company to commercialize Hadoop, was founded in August 2008. That might seem like a speedy timeline, but in fact, Hadoop's evolution was neither simple nor fast.

The First Step Is Always the Hardest

The goal for Nutch was to download every page of the Web, store those pages, process them, and then analyze them all to understand the links between the pages, Cutting recalled. "It was pretty clunky in operation."


Cutting and Cafarella had only five machines to work with. Operating the system required many manual steps, and there was no built-in reliability: if you lost a machine, you lost data, Cutting said.

The break came in 2004, when Google published a paper outlining MapReduce, a programming model for spreading large-scale data processing across a large number of commodity servers. "It took us one year or so to get up to speed," Cutting said.
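To make the MapReduce idea concrete, here is a minimal, single-process sketch of the model in Python, using the classic word-count example. It is not Hadoop's API (Hadoop's native MapReduce API is Java), and the function names `map_phase`, `shuffle`, `reduce_phase`, and `word_count` are hypothetical, chosen only for illustration. The point is the shape of the computation: a map step that processes each input independently, a shuffle that groups intermediate results by key, and a reduce step that combines each group. In a real cluster, the framework runs those independent pieces on many machines at once.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(document: str) -> Iterator[Tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one input split."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group intermediate values by key, as the framework does between phases."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Combine all values that share a key into one result."""
    return key, sum(values)


def word_count(documents: List[str]) -> Dict[str, int]:
    # Map: each document is handled independently, so in a cluster
    # each one could be processed on a different machine.
    intermediate = (pair for doc in documents for pair in map_phase(doc))
    # Shuffle: route every value for a given key to the same reducer.
    grouped = shuffle(intermediate)
    # Reduce: each key is likewise independent of the others.
    return dict(reduce_phase(k, v) for k, v in grouped.items())


if __name__ == "__main__":
    pages = ["the web is big", "the web is linked"]
    print(word_count(pages))
```

Because neither the map nor the reduce function depends on any other input or key, the framework is free to spread them across as many commodity servers as are available, which is what made the model attractive for a Web-scale crawler like Nutch.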

Soon, Cutting and Cafarella had Nutch running on 20 machines. The APIs they had crafted proved useful. "It was still unready for prime time," Cutting said. Nutch was not scalable or stable.

Cutting joined Yahoo in January 2006, and the company decided to invest in the technology, particularly the code that Cutting had carved out of Nutch. That code was called Hadoop, after his son's stuffed elephant.

By 2008, Hadoop had a well-developed community of users. It became a top-level Apache project, and Yahoo announced the launch of what was then the world's largest Hadoop application. Cloudera was founded in August 2008 as the first company to commercialize Hadoop.

"We were not going to depend on any company or person," said Cutting about the open source approach. "We got to have technology that is useful."

In a blog post celebrating Hadoop's 10th anniversary, Cutting talks about working in open source for the first time in 2000, when he launched the Apache Lucene project. He wrote:

The methodology was a revelation. I could collaborate with more than just the developers at my employer, plus I could keep working on the same software when I changed employers. But most important, I learned just how great open source is at making software popular.

Compelling Economies, Compelling Uses

At first glance, Hadoop seems to rely on an inversion of conventional thinking: it moves the application to the data rather than the data to the application. Calling that an inversion is misleading, though. It comes down to the economics of operation.

Start with the hardware. The personal computer created a system with a decent CPU, memory, and hard drive to deliver "the most economic way to purchase a unit of computation," Cutting told InformationWeek. "The challenge is taking a lot of these, and hooking them together."

He added: "Local drives are faster than networks." A server or PC can typically read from its own disks with more I/O bandwidth than the network connecting it to other machines can deliver. It therefore makes more sense to leave data where it resides and move the app to the data. "It's mostly physics and economics," Cutting said.
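The "move the app to the data" principle can be sketched as a locality-aware task assignment. The Python below is a simplified illustration under stated assumptions, not Hadoop's actual scheduler: `assign_tasks`, the block and node names, and the one-task-per-node rule are all hypothetical. Each data block is assumed to be stored (replicated) on a known set of nodes, and the scheduler greedily prefers a free node that already holds the block, counting how many tasks would instead have to pull their input across the network.

```python
from typing import Dict, List, Set, Tuple


def assign_tasks(
    block_locations: Dict[str, Set[str]],
    free_nodes: List[str],
) -> Tuple[Dict[str, str], int]:
    """Greedily assign one task per data block, preferring a node that stores the block.

    Returns the block -> node assignment and the number of tasks that must
    read their input over the network. Assumes each free node runs one task.
    """
    available = list(free_nodes)
    assignment: Dict[str, str] = {}
    remote_reads = 0
    for block, replicas in block_locations.items():
        if not available:
            break  # no capacity left; remaining blocks would wait for a later round
        # Prefer a free node that already holds a replica of this block,
        # so the code moves to the data instead of the data moving to the code.
        local = [node for node in available if node in replicas]
        if local:
            node = local[0]
        else:
            node = available[0]
            remote_reads += 1  # no local copy: this task reads over the network
        assignment[block] = node
        available.remove(node)
    return assignment, remote_reads


if __name__ == "__main__":
    blocks = {"b1": {"n1", "n2"}, "b2": {"n2", "n3"}, "b3": {"n4"}}
    print(assign_tasks(blocks, ["n1", "n2", "n3"]))
```

With the sample input, two of the three tasks read from a local disk and only one (block `b3`, whose sole copy sits on a busy node) crosses the network. A greedy pass like this is not guaranteed to be optimal, and Hadoop's real schedulers are more elaborate (they also consider rack locality, for example), but the preference for local reads is the same idea Cutting describes.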


Now that Hadoop has become more commonplace, two types of users have emerged. The first are people "who find a problem they cannot solve any other way," Cutting said.

As an example, Cutting cited a credit card company with a data warehouse that could only store 90 days' worth of information. Hadoop allowed the company to pool five years' worth of data. Analysis revealed patterns of credit card fraud that could not be detected within the shorter time limit.

The second type of user will apply Hadoop to solve a problem in a way that had not been technically possible before, according to Cutting. Here he cited the example of a bank that had to understand its total exposure to risk. It had a retail banking unit, a loan arm, and an investment banking effort, each with its own backend IT system.

The bank could use Hadoop to "get data from all its systems into one system," Cutting said. From there, IT could normalize the raw data and experiment with different methods of analysis, "figuring out the best way to describe risk," he explained. In both cases, the users already had "domain knowledge," Cutting said.

"They know the problem they have, and potentially a better understanding of the data." Asking the right questions is "not that hard," he said. The challenge is learning how to use the new tools that enable Hadoop to function.

Teaching the Task

Cloudera and its big data competitors are all trying to expand their pool of users by offering courses, through a variety of channels, that teach how to use Hadoop and handle big data. At the same time, vendors are looking for ways to let IT people use tools they already know to interface with big data applications, another approach to narrowing the skills gap, Cutting noted.

Even universities are offering courses in Hadoop. While this will not produce a certified data technician, it does yield a computer science graduate who is familiar with Hadoop. "It becomes a 'new normal' set of skills," Cutting said. It will take a long time to bring the IT industry up to speed on Hadoop, since this technology is still new to many, he noted.

Hadoop adoption will also be a long process. Many Fortune 500 companies are running Hadoop "in a few small corners," Cutting said. Web companies such as Facebook, LinkedIn, and Yahoo have already moved their data to Hadoop, while Google relies on the in-house systems that inspired it. Banks and insurance companies "are going to take a while to move," he said.

Coming from Yahoo, Cutting took for granted the open source culture in which everyone shared software. Traditional corporations relied more on relational database management systems, "which we did not have much use for," Cutting said.

Companies typically hang on to legacy systems so long as they are useful and paid for, but the transition to Hadoop's open source approach is underway. Cutting sees the irony: Eventually, he said, all companies will be using the same tools as the Web outfits.
